What virtual try-on actually delivers in 2026

"Virtual try-on" is a search term that surfaces two different products, but eight of the ten most-clicked results in 2026 sell the same thing: an AI model image generator. You feed a flat-lay, hanger shot, or mannequin frame into the tool. The tool returns a clean, catalog-ready image of the garment on a synthetic AI model. The output uploads anywhere: your DTC Shopify storefront, your wholesale lookbook PDF, your Etsy, eBay, Poshmark, Mercari, Depop, or Amazon listing, or your Meta or TikTok ad creative. The shopper sees a styled on-model photo and clicks add-to-cart at the same rate as for a real studio shot.

The alternative product — a shopper-facing try-on widget where customers upload a selfie — is a smaller, separate category (Google Shopping try-on, ASOS in-app, a few Shopify-native diffusion apps). It serves a different funnel position. We address it briefly toward the end of this guide. Almost everyone reading "best virtual try-on tools 2026" is shopping for the first product: an AI model image generator that replaces the photoshoot.

Three structural shifts made this category usable for every part of the apparel and jewelry ecosystem in the last 18 months. First, diffusion models trained on millions of garment-and-body pairs now handle drape, pattern, and structure well enough that the average shopper can't tell AI from studio — good enough for editorial-adjacent brand work, not just budget catalog listings. Second, web UIs replaced the API-only experience that locked early tools to engineering teams, opening the workflow to brand marketing leads, founders, and agency producers without a developer. Third, per-image cost dropped from $1+ to under $0.10 in 2024–2025, putting the workflow inside the per-SKU cost target of both DTC brands and margin-conscious resellers.

The eight tools in this guide all generate AI model images from one product photo. They differ in input flexibility, model library size and diversity, fabric fidelity, output styles, and price. We evaluate each against those five dimensions, then run a side-by-side comparison so you can pick the right one for your category and volume.

Five dimensions to evaluate any model image generator

Every AI model image generator claims it's the best at what it does. The honest evaluation reduces to five dimensions — get any one wrong and the tool fails for your workflow.

| Dimension | What it means | Why it matters |
|---|---|---|
| 1. Input flexibility | What source photos does the tool accept: flat-lay only, hanger, mannequin, phone snap, supplier image? | If the tool needs studio-grade input, you're back to needing a studio |
| 2. Model library | How many model templates, what diversity (ethnicity, body type, age, pose), can you upload your own? | Defines whether the output matches your brand persona |
| 3. Fabric fidelity | Does the tool preserve fabric texture, pattern alignment, and structural detail on the AI model? | Patterned and structured garments are where weak tools fall apart |
| 4. Output styles | White-background catalog only, lifestyle scenes, editorial, ghost mannequin alongside on-model? | Different marketplaces need different image styles (Amazon main vs Etsy gallery) |
| 5. Per-image cost | Free tier credits, dollars per image, monthly plan break-even point | Determines whether the tool fits your unit economics at volume |
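The "get any one wrong and the tool fails" rule can be sketched as a simple gate rather than an average. This is a minimal illustration, assuming you rate each dimension from 1 to 5 after testing a tool on your own garments; the dimension keys, the 1-5 scale, and the threshold of 3 are all hypothetical choices, not part of any tool's scoring.

```python
# Hypothetical keys for the five dimensions in the table above.
DIMENSIONS = ("input", "model_library", "fabric", "output_styles", "cost")


def evaluate(ratings: dict[str, int], minimum: int = 3) -> tuple[bool, list[str]]:
    """Return (passes, failing_dimensions).

    A tool passes only if every dimension meets the minimum rating,
    so one weak dimension is enough to fail it.
    """
    missing = [d for d in DIMENSIONS if d not in ratings]
    if missing:
        raise ValueError(f"missing ratings: {missing}")
    failing = [d for d in DIMENSIONS if ratings[d] < minimum]
    return (not failing, failing)


# Example ratings (made up): strong everywhere except output styles.
example = {"input": 5, "model_library": 4, "fabric": 4, "output_styles": 2, "cost": 5}
print(evaluate(example))  # (False, ['output_styles'])
```

A gate like this is stricter than a weighted average, which would let four strong scores hide one disqualifying weakness.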

One dimension deserves its own emphasis: ghost mannequin as a complementary output. On-model lifestyle imagery and white-background ghost mannequin imagery fill two different listing slots. Amazon's main image rules require a pure white background with the garment filling the frame, which is a ghost mannequin shot, not an on-model render. Etsy's main image converts best with an on-model render, but the gallery wants a ghost mannequin variant for clarity. A tool that ships both saves you switching between tools; one that ships only on-model leaves you needing a second tool for marketplace main images.

Try the marketplace-ready output first. A single flat-lay phone photo returns a lifestyle on-model image with templated pose, persona, and scene — and a matching ghost mannequin shot from the same source. Try Snappyit AI Fashion Model free →

1. Snappyit — AI Fashion Model as the lead output, plus ghost mannequin and jewelry on-model

Snappyit AI Fashion Model serves the full range of fashion businesses — DTC apparel brands launching seasonal collections, Shopify and BigCommerce storefronts, jewelry labels, marketplace resellers on Etsy, Poshmark, eBay, and Amazon Handmade, plus agencies producing catalog imagery for client brands. The Fashion Model engine is the lead product: feed it one garment photo on a flat surface and it returns a full on-model render with a templated persona, pose, and scene. The same source image can also drive a ghost mannequin shot or a jewelry on-model render, so a single upload covers every model image type a brand or seller's listing needs.

Input flexibility. Phone flat-lay, hanger shot, mannequin frame, studio shot, or supplier image all work as a Fashion Model source. Snappyit tolerates wrinkles in the source photo and cleans them on the way to the on-model render, so it handles both the messy phone snaps marketplace sellers shoot and the cleaner pre-production samples that brands and agencies work with. The background does not need to be pre-cleaned before uploading to Fashion Model.

Model library. The AI Fashion Model template gallery is the deepest of the three modes — personas covering ethnicity, body type, age range, and styling context, paired with scene templates from studio backdrop to outdoor lifestyle. The Fashion Model mode is the one that maps to "virtual try-on" search intent. The two supporting modes — Ghost Mannequin (3D-worn shape, no visible model) and Jewelry Model (rings on hands, earrings on ears, necklaces on necks) — round out the three model image generation outputs from one upload.

Fabric fidelity. Snappyit Fashion Model holds up well on knits, cotton tees, dresses, button-downs, hoodies, jackets, and pants — the cotton-to-mid-weight-fabric range that covers most marketplace listings. Print preservation on graphic tees and patterned dresses is reliable through the Fashion Model render. The hard cases — sheers, lace, complex 3D folds — get the same hit-or-miss treatment any 2026 AI model image generator delivers.

Output styles. Fashion Model is the headline output — a lifestyle on-model render that matches what Etsy, Poshmark, Shopify, and Mercari main images convert best with. The same workflow also exports a ghost mannequin variant for Amazon's white-background main-image rule, so you don't switch tools between marketplaces. Most single-output competitors leave you needing a second tool to cover both slots; Snappyit ships both from the same source.

Per-image cost. Credit-based with a free starter tier. Volume sellers can batch-process a season's inventory through Fashion Model in one sitting at single-digit cents per image at scale tier.

Best for. DTC apparel brands, Shopify and BigCommerce storefronts, jewelry labels, capsule-collection launches, agencies producing client catalogs, plus Etsy makers, Poshmark closets, eBay drops, and Amazon Handmade sellers — any fashion business whose listing strategy leads with a lifestyle on-model image and falls back to ghost mannequin for marketplace main-image requirements.

Try Snappyit AI Fashion Model free →

2. Claid — broad model library, enterprise API

Claid.ai takes a single clothing photo and renders it on any of 100+ pre-built virtual models. The platform is API-first, positioned for enterprise teams running automated catalog ingestion at scale.

Input flexibility. Clean product photos work best — supplier-grade or above. Claid is less forgiving of messy phone snaps than Snappyit and Botika; the AI assumes the input has been pre-cleaned of distracting backgrounds.

Model library. The strongest dimension of the Claid offering. 100+ pre-built virtual models cover ethnicity, body type, age, and pose. You can also upload your own model templates — useful if you've shot brand-model imagery and want to keep consistency across SKUs without rebooking the model for every new piece.

Fabric fidelity. Solid on standard apparel. Pattern preservation across the larger model library is consistent because the underlying mapping is trained on a fixed pose set rather than re-inferring per session.

Output styles. On-model only. No native ghost mannequin output. For marketplaces where a clean white-background catalog shot is the main image (Amazon, parts of Shopify), you'd pair Claid with a separate ghost-mannequin tool — which is friction in the workflow.

Per-image cost. API-volume pricing, enterprise-leaning. Makes economic sense above thousands of garments per month for established brands and large catalogs; less so for small teams or solo sellers doing 50–200 SKUs.

Best for. Mid-to-large fashion ecommerce teams with an engineering function and a defined model persona.

3. FASHN.ai — research-grade pattern fidelity, API-only

FASHN.ai ships a model pre-trained on around 18 million try-on examples — the largest published training set in this category. The result is strong garment-to-body geometry mapping, with patterns, prints, and asymmetric cuts surviving the AI model image render better than on lower-data competitors.

Input flexibility. Accepts flat-lay, hanger, and mannequin source images. Quality is best with a clean background, but the model is forgiving of imperfect input.

Model library. Modest pose library compared with Claid or Snappyit. FASHN.ai's value isn't a deep model template gallery — it's per-image fidelity on whatever pose is being rendered. If you need a specific persona or scene, you'll be working with a narrower set of options than competitors.

Fabric fidelity. The strongest dimension. If your catalog is full of vintage patterns, intricate prints, complex structure (corsets, jumpsuits, layered tops), or asymmetric cuts, FASHN.ai handles them more gracefully than tools trained on cleaner studio data. This is where the 18M training pairs pay off.

Output styles. Single-pose on-model only. No ghost mannequin, no jewelry, no batch lifestyle scenes — the tool is laser-focused on one job (garment-on-model rendering) and does it very well.

Per-image cost. Around $0.075 per image at the developer tier. API-first; no polished web UI for non-technical users. Brands and sellers without an engineering team either glue it together with no-code tools (Zapier, Make) or wait for a self-serve product to ship.

Best for. Apparel brands with engineering bandwidth running pattern-heavy or vintage catalogs.

4. Photoroom — mobile-first model image generation, generic templates

Photoroom is a general-purpose AI photo editing platform with 150+ million downloads. Model image generation is one of several features bundled alongside background removal, AI product staging, and batch processing. It's the broadest tool in this list — not the deepest.

Input flexibility. Mobile-first workflow optimized for phone photos. The iPhone app accepts whatever you can snap; the AI does the cleanup. Strong on the "I just want to ship this listing" workflow.

Model library. Generic templates reused across millions of brands. Your listings will look similar to everyone else's listings using Photoroom — which is the price of using a tool with 150M downloads. You cannot upload custom model templates.

Fabric fidelity. Adequate on cotton, denim, and simple solids. Lags behind Snappyit, FASHN.ai, and Claid on knits, sheers, and structured garments. Best treated as the starter model image generator, not the studio replacement.

Output styles. Limited ghost mannequin output plus on-model rendering. Photoroom's ghost mannequin is reasonable but not as clean as Snappyit or SellerPic on complex garments.

Per-image cost. Bundled into Photoroom's Pro plan starting around $14/month — economical if you already use the tool for background removal or social-image batching.

Best for. Founders, brand operators, and sellers who already use Photoroom for background removal and want a one-app workflow, accepting that the model output is generic.

5. Botika — mannequin-shot conversion specialist

Botika turns packshots, flat-lays, and mannequin shots into AI model images by swapping in models, poses, and backgrounds. It markets itself as a "photo fix" workflow rather than a try-on tool — useful framing because it's built to clean up existing photoshoot output, not skip the photoshoot entirely.

Input flexibility. Highest in this list for one specific input type: mannequin shots. If you have an archive of mannequin photography and want to convert those frames to on-model images without re-shooting, Botika is purpose-built. Also accepts flat-lay and packshot.

Model library. Pose-swap and background-swap layered on top of model swap. One mannequin source frame produces multiple lifestyle variants — same garment, different pose, different scene — which is unique among 2026 model image generators.

Fabric fidelity. Strong on the categories Botika trained on (apparel, especially tops and dresses). The mannequin-to-on-model conversion preserves drape better than tools that start from a flat photo, because the source already has a 3D shape.

Output styles. On-model with multiple pose and scene variants. No ghost mannequin output; that's an explicit out-of-scope choice given the tool's "photo fix" positioning.

Per-image cost. Subscription-based. The workflow assumes you already have a baseline photoshoot, so the value is per-pose multiplied across each garment.

Best for. Brands transitioning from mannequin photography to on-model imagery without re-shooting their archive.

6. Modelia — outfit combination on a styled AI model

Modelia is an all-in-one AI fashion visual platform whose standout feature is outfit combination — drag and drop any top with any bottom and accessories to create complete styled looks on a single AI model. For catalog teams running collections rather than single SKUs, that's a real differentiator.

Input flexibility. Accepts standard product photography input. Like Claid, it's friendlier to clean studio-grade source than messy phone photos.

Model library. Strong model library plus the unique outfit combination layer on top. You can render the same AI model wearing twelve different combinations of pieces from your collection; no other tool in this list does that natively.

Fabric fidelity. Good on the categories Modelia trained on. The outfit combination step can occasionally introduce small artifacts at garment junctions (where a top meets a bottom, where a jacket meets a shirt) — most of these are invisible at marketplace thumbnail resolution.

Output styles. Fashion-editorial styled looks rather than marketplace-catalog cleanliness. Etsy and eBay shoppers expect a cleaner, less stylized image; Shopify and DTC brand sites tolerate Modelia's editorial look better.

Per-image cost. Monthly subscription with per-image credit consumption. Mid-tier pricing among the tools in this list.

Best for. DTC fashion brands with a coordinated collection mindset rather than a single-SKU mindset.

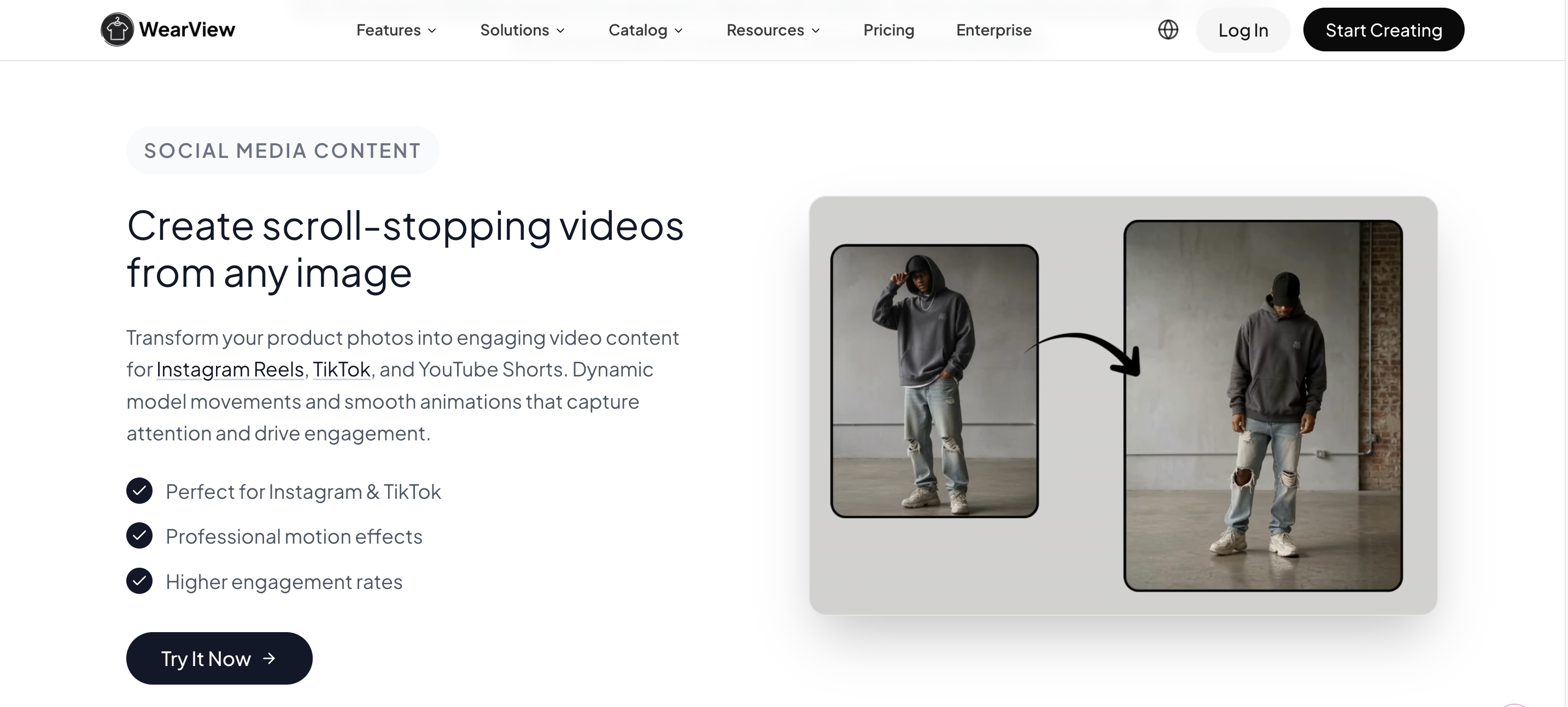

7. WearView — fashion model template gallery by ethnicity, body type, and pose

WearView sits in the same brand-side AI model image generation category, with a clear differentiator: a deep template library indexed by ethnicity, body type, and pose. For brands building a multi-persona catalog (a different model represents each sub-line), WearView gives you the most explicit persona selector among 2026 tools.

Input flexibility. Accepts flat-lay and hanger source photos. Standard clean-input expectations; phone snaps work, supplier images work, mannequin frames are less of a focus.

Model library. The strongest dimension. Templates are sortable by ethnicity (Asian, Black, Latinx, white, mixed), body type (slim, midsize, plus, athletic), age range (teen, 20s, 30s, 40s+), and pose (standing, walking, three-quarter, seated). For a brand that wants distinct model imagery per sub-collection, WearView delivers without hiring real models.

Fabric fidelity. Solid on standard apparel. Doesn't push the envelope on complex patterns the way FASHN.ai does, but it doesn't fail on them either.

Output styles. On-model primary, with selected lifestyle scenes per template. No native ghost mannequin output.

Per-image cost. Subscription-based with a free entry tier. Pricing scales with output volume per month.

Best for. Brands selling across multiple personas or sub-collections who need consistent model imagery per line.

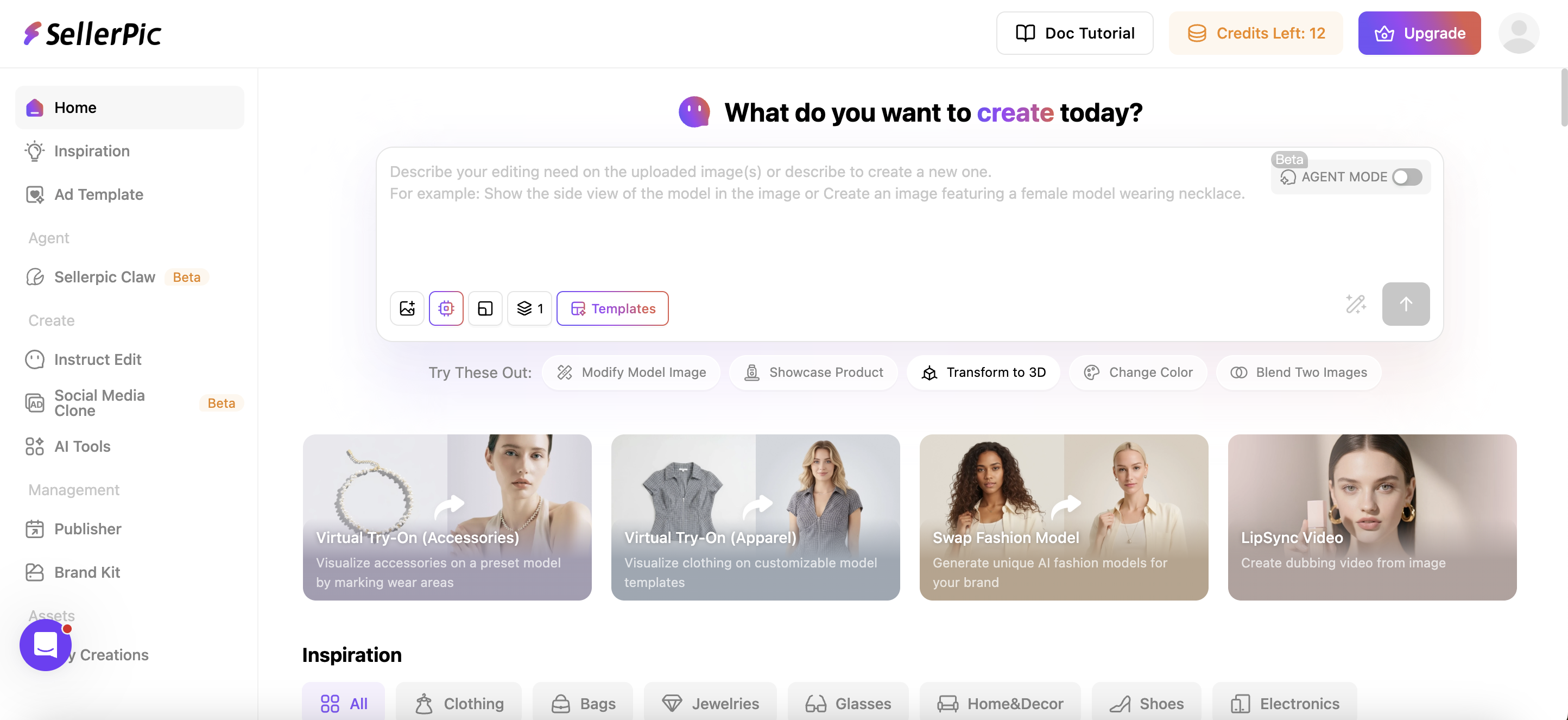

8. SellerPic — multi-feature fashion suite with on-model and ghost mannequin

SellerPic is a multi-feature fashion AI suite that combines on-model try-on, ghost mannequin, short-form video, background removal, and jewelry try-on under one dashboard. The positioning is closest to Snappyit among 2026 tools — both ship multiple output styles from one source image.

Input flexibility. Flat-lay, hanger, mannequin, and supplier imagery all accepted. Tolerant of phone-grade source.

Model library. Curated model template selection covering common persona buckets. Less granular than WearView's ethnicity-body-type-pose matrix; more curated than Photoroom's generic templates.

Fabric fidelity. Good on cotton, denim, and standard apparel. Less stress-tested on edge cases (sheers, lace, structured outerwear) than FASHN.ai or Snappyit, in our reviews.

Output styles. Ghost mannequin + on-model + short-form video + jewelry try-on. Of the 2026 tools, only SellerPic and Snappyit bundle full ghost mannequin and on-model rendering in the same workflow; Photoroom's ghost mannequin output is more limited.

Per-image cost. Free starter tier plus monthly plans under $30 at the entry paid tier. Reasonable for small brands, founders, and solo sellers.

Best for. Sellers who want one tool for ghost mannequin, on-model, and lightweight video — comparable workflow to Snappyit, slightly different output style.

A note on shopper-facing try-on widgets

Most of this guide focuses on AI model image generation: software that produces static catalog images. There's a smaller, separate category of shopper-facing try-on widgets where a customer uploads a selfie and sees the garment on themselves inside the storefront. Google Shopping try-on, ASOS's in-app try-on (launched February 2026 with roughly 10,000 products), and Shopify-native diffusion apps like Genlook sit in this category. Multi-category SDKs like Banuba handle try-on for accessories, glasses, makeup, and jewelry across the face and head.

Shopper-facing try-on is a real category but answers a different question: it's a conversion-rate optimization layer on a storefront that already has product traffic. If you're shopping for AI model image generation to populate listings, you're in the right category with the eight tools above. Add shopper-facing try-on later, once you have a Shopify store doing $30k+/month and a return rate worth attacking.

The structural connection: clean catalog images from a model image generator become the input layer for platform-level try-on engines. As Google, Walmart, and Amazon roll out shopper-side try-on across their indices in 2026–2027, the brands with the cleanest AI on-model and ghost mannequin imagery surface most prominently.

Side-by-side: what each tool ships per dimension

Stacking the eight model image generators against the five evaluation dimensions clarifies which tool fits which seller. Each scorecard cell summarizes one tool against one dimension — the longer comparison sits in the individual sections above.

| Tool | Input | Model library | Fabric | Output styles | Cost |
|---|---|---|---|---|---|
| Snappyit | Phone-tolerant | Curated, garment-typed | Strong | Ghost mannequin + on-model + jewelry | Free tier + credits |
| Claid | Clean input | 100+ models, upload custom | Solid | On-model only | Enterprise API |
| FASHN.ai | Flexible | Modest | Best in class | On-model only | ~$0.075/img API |
| Photoroom | Mobile-first phone | Generic templates | Adequate | Light ghost + on-model | ~$14/mo Pro |
| Botika | Mannequin specialist | Pose + bg swap | Strong on drape | On-model variants | Subscription |
| Modelia | Clean input | Strong + outfit combo | Good | Editorial styled | Subscription |
| WearView | Flat-lay / hanger | Best persona matrix | Solid | On-model only | Free tier + subscription |
| SellerPic | Phone-tolerant | Curated | Good | Ghost + on-model + video + jewelry | Free tier + ≤$30/mo |
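One way to read the table is as a filter: list the output types your channels require, then keep only the tools that ship all of them. The sketch below transcribes the "Output styles" column by hand. The set labels (`ghost_mannequin`, `on_model`, and so on) are our own shorthand, Photoroom's "light ghost" is counted as ghost mannequin support, and Modelia's editorial looks are counted as on-model output, so treat the mapping as an assumption to adjust.

```python
# Output styles per tool, transcribed from the comparison table above.
# Labels are illustrative shorthand, not vendor terminology.
TOOL_OUTPUTS = {
    "Snappyit": {"ghost_mannequin", "on_model", "jewelry"},
    "Claid": {"on_model"},
    "FASHN.ai": {"on_model"},
    "Photoroom": {"ghost_mannequin", "on_model"},  # ghost output described as limited
    "Botika": {"on_model"},
    "Modelia": {"on_model"},  # editorial styled looks
    "WearView": {"on_model"},
    "SellerPic": {"ghost_mannequin", "on_model", "video", "jewelry"},
}


def shortlist(required: set[str]) -> list[str]:
    """Return tools (alphabetically) whose outputs cover every required type."""
    return sorted(
        name for name, outputs in TOOL_OUTPUTS.items() if required <= outputs
    )


# A seller who needs an Amazon main image plus an on-model hero image:
print(shortlist({"ghost_mannequin", "on_model"}))
# ['Photoroom', 'SellerPic', 'Snappyit']
```

The filter only answers "does the tool ship this output at all"; quality differences, such as Photoroom's lighter ghost mannequin, still need the hands-on test on your own products.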

A brand and seller workflow that actually ships

Here's the workflow we see brands, agencies, and sellers use most often when going from a single garment photo to a multi-channel catalog — whether the destination is a DTC Shopify storefront, a wholesale lookbook, or a multi-marketplace listing.

- Capture one source photo. Lay the garment flat in natural window light, or hang it on a padded hanger against a clean wall. Phone camera at 1:1 ratio, 1500px+ on the long edge. Jewelry: shoot on a neutral surface, top-down, with even daylight.

- Run AI Fashion Model first. This is the converting hero image on Etsy, Poshmark, Shopify, and Mercari — a lifestyle on-model render that lets a shopper picture the garment worn. Snappyit AI Fashion Model takes one flat-lay phone photo and outputs the on-model render in 60 seconds, with persona and scene templates you can swap per listing.

- Export a ghost mannequin variant from the same source. The 3D-worn shape covers Amazon's white-background main-image rule and reinforces fit on the secondary slots in your Etsy and eBay galleries. Snappyit Ghost Mannequin reuses the upload — no second shoot, no second tool.

- For jewelry, add a try-on render. Hand for rings, ear for earrings, neck for pendants. Snappyit Jewelry Model handles all three.

- Resize per marketplace. Etsy 2700×2700, Amazon 1000×1000+, eBay 1600×1600, Poshmark 1500×1500. Use the free image resizer to batch-crop in one pass.

- Cross-list. Same image set works on every channel. Update title, description, and price per platform.

End-to-end this is 4–6 minutes per garment versus 30–60 minutes for a hand-shot photo + retouching workflow, and it scales linearly as you add SKUs.
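The resize step can be planned before touching any image library. The sketch below, using only the pixel targets listed in the steps above, computes a centered square crop and flags marketplaces where the source would have to be upscaled. The function names are hypothetical, and the Amazon figure is treated as a minimum because the step lists it as "1000×1000+".

```python
# Square targets in pixels, taken from the resize step above.
MARKETPLACE_PX = {"etsy": 2700, "amazon": 1000, "ebay": 1600, "poshmark": 1500}


def square_crop_box(width: int, height: int) -> tuple[int, int, int, int]:
    """Centered square crop as (left, top, right, bottom)."""
    side = min(width, height)
    left = (width - side) // 2
    top = (height - side) // 2
    return (left, top, left + side, top + side)


def resize_plan(width: int, height: int) -> dict[str, dict]:
    """Per-marketplace crop box, output size, and an upscaling warning flag."""
    box = square_crop_box(width, height)
    side = box[2] - box[0]
    return {
        market: {
            "crop": box,
            "size": (target, target),
            "needs_upscale": side < target,  # source smaller than the target
        }
        for market, target in MARKETPLACE_PX.items()
    }


# A typical 3024x4032 phone photo crops to a 3024px square.
plan = resize_plan(3024, 4032)
print(plan["etsy"]["crop"])  # (0, 504, 3024, 3528)
```

The crop box uses the (left, top, right, bottom) ordering that Pillow's `Image.crop` accepts, so the plan can be handed to an image library afterwards. A `needs_upscale` flag on Etsy is a hint to reshoot or export at a higher resolution rather than stretch the image.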

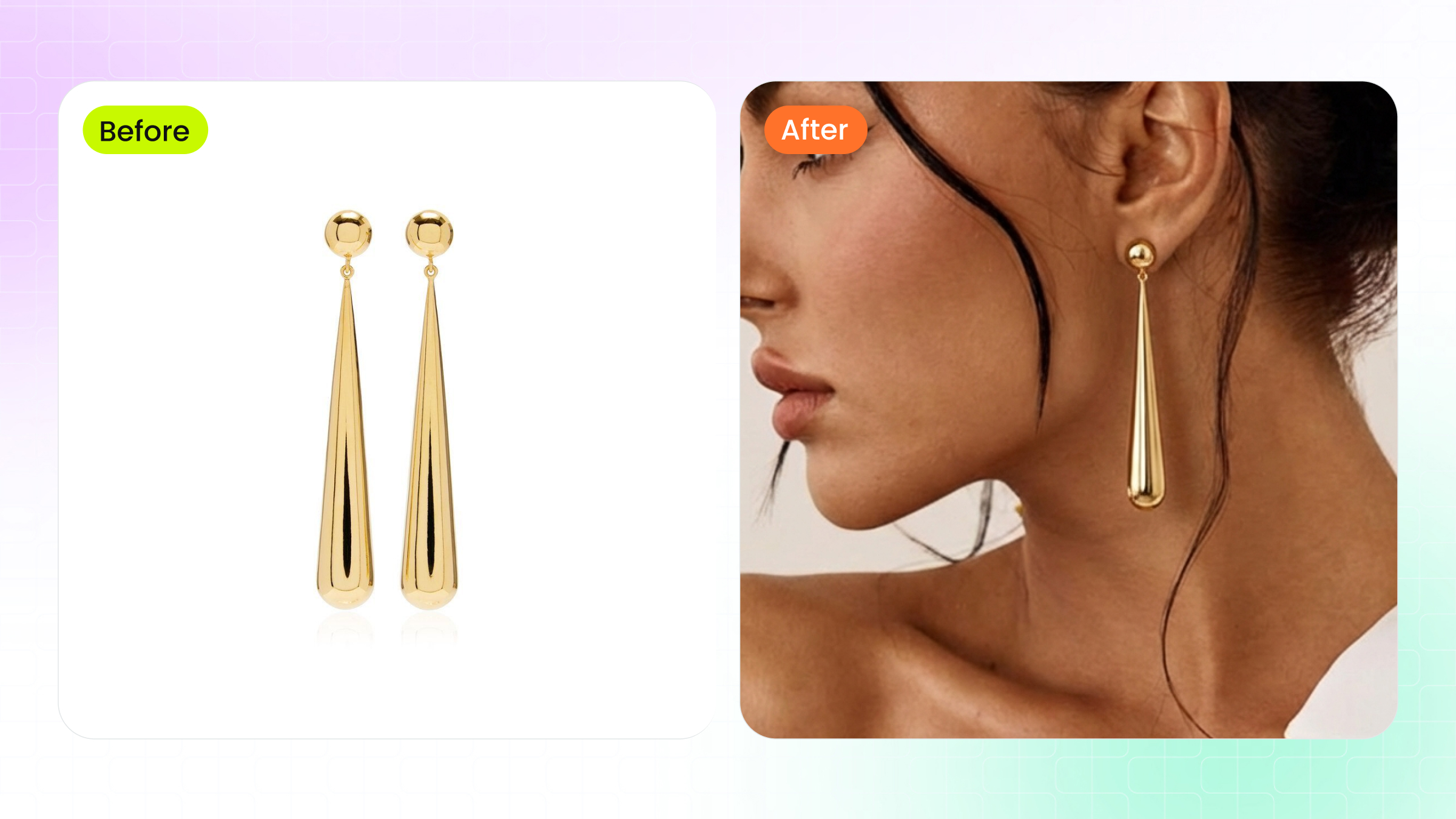

A note on jewelry try-on specifically

Jewelry is the category where virtual try-on delivers the highest ROI per image, because traditional jewelry photography is brutal: a single ring shot requires polarized lighting, a clean white sweep, dust-free macro focus, hand model booking, and 20+ minutes of retouching per frame. AI try-on collapses this to one upload.

Snappyit's jewelry pipeline is two-stage: Jewelry Retouch first (clean the supplier image — remove dust, reflections, color cast) and then Jewelry Model (place the piece on a hand, ear, or neck template). The two-stage flow handles the awkward source images that come back from Alibaba, Faire, or your own studio without re-shooting.

Banuba is the alternative for jewelry brands building a shopper-facing experience (letting a customer try the ring on her own hand via camera). For static catalog imagery, Snappyit's pipeline is faster and works out of the box.

Skip the dust and the hand model. Upload a supplier photo of a ring, earring, or necklace and Snappyit returns a clean retouched image plus an on-model render. Try Snappyit Jewelry Try-On free →

Mistakes to avoid when buying a virtual try-on tool

Most virtual try-on purchases that fail were decided wrongly before the demo. Five mistakes account for the majority of "we bought it and never used it" stories from apparel and jewelry sellers:

- Buying for category instead of for stage. An enterprise virtual try-on API with a $5k/month minimum is wasted on a brand doing 50 orders/month. Match the tool to your current revenue tier; you can always upgrade. Snappyit, WearView, and SellerPic all have free starter tiers that match early-stage volume.

- Optimizing for the wrong output type. A brand or seller who needs ghost mannequin first picks an on-model-only tool and ends up with images that don't match the Amazon or Etsy catalog standard. Confirm the tool generates the output type that matches every channel you sell on — DTC storefront, wholesale catalog, and marketplace listing all have different main-image conventions.

- Not testing on your own products. The demo gallery always looks perfect; the real test is running 5–10 of your own garments through the tool and checking output quality across your actual catalog (different fabrics, patterns, structure). Any virtual try-on platform worth paying for offers free credits exactly for this validation step.

- Underestimating the workflow integration cost. An API tool with $0.075/image looks cheaper than a SaaS at $30/month — until you account for the developer hours to build the integration. Founders, brand marketing leads, and agency producers almost always come out ahead with a polished web UI over a cheaper API.

- Picking a tool with poor jewelry support if you sell jewelry. Most virtual try-on tools optimize for apparel first. If half your catalog is jewelry, confirm the tool handles rings, earrings, and necklaces. Snappyit and Banuba are the strongest; others are weaker.

The validation step that solves all five mistakes: pick the 5–10 products in your catalog that vary the most (one in each major fabric / structure / category), run them through the free tier of two or three virtual try-on tools, and compare outputs. The decision becomes obvious in 30 minutes. Don't sign a paid plan without this test.
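The integration-cost mistake is easier to see with the arithmetic written out. The sketch below compares first-year cost for a $0.075/image API against a $30/month SaaS plan, the two price points mentioned above. The 20 developer hours and the $75 hourly rate are hypothetical placeholders, and the SaaS plan is assumed to cover your monthly volume without overage fees; substitute your own numbers.

```python
def first_year_cost_api(
    images_per_month: int,
    price_per_image: float = 0.075,  # API price point from the text
    dev_hours: float = 20.0,         # hypothetical integration effort
    hourly_rate: float = 75.0,       # hypothetical developer rate
) -> float:
    """Twelve months of per-image usage plus a one-off integration cost."""
    usage = 12 * images_per_month * price_per_image
    integration = dev_hours * hourly_rate
    return usage + integration


def first_year_cost_saas(monthly_fee: float = 30.0) -> float:
    """Twelve months of a flat subscription (volume caps ignored)."""
    return 12 * monthly_fee


def breakeven_images_per_month(
    monthly_fee: float = 30.0, price_per_image: float = 0.075
) -> float:
    """Monthly volume at which API usage alone equals the subscription fee."""
    return monthly_fee / price_per_image


for volume in (50, 200, 400):
    print(volume, round(first_year_cost_api(volume), 2), first_year_cost_saas())
```

On usage alone, the API stays cheaper until about 400 images per month. Once the assumed $1,500 of integration work is added, a seller at 200 images per month pays roughly $1,680 in the first year through the API versus $360 for the subscription.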

Where virtual try-on is heading in 2026 and beyond

Three structural shifts are reshaping the virtual try-on landscape, and buyers should price them into their tool selection over the next 18 months:

Platform-native try-on becomes baseline. Google Shopping, ASOS, and (incoming) Amazon and Walmart will host shopper-facing virtual try-on as a free platform feature. The competitive advantage shifts from "do you have a try-on widget" to "is your product imagery good enough to fuel platform try-on." This makes brand-side image quality more important, not less — clean ghost mannequin and AI on-model outputs are the input layer to platform try-on indices.

Jewelry try-on closes the gap to apparel. Jewelry has lagged apparel in virtual try-on accuracy because of scale precision (a 5mm earring on a face has less margin for error than a tee on a torso). 2026's training data, especially around hand and ear keypoint detection, has narrowed the gap. By 2027, jewelry try-on will be as routine as apparel ghost mannequin.

Video try-on becomes the new format. Static images convert; video converts harder. Virtual try-on tools are starting to ship motion outputs — a 5-second loop of the model walking, turning, or holding a pose. Snappyit's image-to-video and fashion video outputs are early entries; expect this to become the default by mid-2027.

If you're buying a virtual try-on tool today for a 12+ month horizon, prioritize platforms that ship both static and video outputs, such as Snappyit, SellerPic, Modelia, and a few specialist video try-on tools. Tools locked to single-frame output will look dated within a year.

FAQ

What is an AI fashion model image generator and how does it differ from a virtual try-on widget?

An AI fashion model image generator takes a product photo — a flat-lay, hanger shot, or mannequin frame — and produces a catalog-ready image of the garment on a synthetic model. The output is a static image you upload to your Etsy, eBay, Shopify, or Amazon listing. A virtual try-on widget is a shopper-facing tool that runs on a customer's selfie inside a storefront. Most people searching "virtual try-on tools" actually want the first product: software that replaces a photoshoot, not a checkout widget. Snappyit, Claid, FASHN.ai, Botika, Modelia, Photoroom, WearView, and SellerPic all fall in the model image generator category.

What kind of source photo gives the best AI model image output?

A clean flat-lay or hanger shot on a plain background with even daylight is the strongest input. The AI uses the source to infer garment color, drape, and structure — anything that obscures those (deep shadow, busy background, harsh flash, severe wrinkles) carries through into the output. Snappyit, FASHN.ai, and Botika all tolerate phone photos as long as the garment is fully visible and roughly centered. Mannequin shots are accepted by Botika in particular because its pipeline is built around the mannequin-to-on-model conversion.

How realistic are 2026 AI fashion model images compared to real photoshoots?

For marketplace and Shopify catalog listings, AI model images from Snappyit, Claid, FASHN.ai, and Modelia are routinely indistinguishable from studio photography to the typical shopper. The gap remains on editorial campaigns where styling, hair, makeup, and on-set art direction are part of the product story — those still need real photoshoots. For per-SKU catalog images on Etsy, eBay, Poshmark, Mercari, Depop, Amazon, or Shopify, AI model image generation is the dominant cost-effective workflow in 2026.

Can I use AI-generated model images on Etsy, Amazon, eBay, or Shopify?

Yes for Etsy, eBay, Poshmark, Mercari, Depop, Vinted, Shopify, and Whatnot: AI on-model images are accepted as main and gallery images. Amazon's main image policy still requires pure-white-background product shots with the product filling at least 85 percent of the frame, so AI on-model output goes into gallery slots 2 through 7. The Amazon main image stays a clean white-background shot, which AI ghost mannequin tools also produce. Each marketplace's image policy is worth a quick read before going live.
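A quick pre-upload check for the Amazon main image rule can be sketched as follows. It assumes you already know the garment's bounding box and the background color from your editing tool, and it reads "filling 85 percent of the frame" as the garment's longest side covering at least 85 percent of the matching frame dimension. That interpretation and the function names are our assumptions, so confirm against Amazon's current policy before relying on it.

```python
WHITE = (255, 255, 255)


def fill_ratio(frame_w: int, frame_h: int, bbox: tuple[int, int, int, int]) -> float:
    """Largest share of the frame covered by the product along either axis.

    bbox is (left, top, right, bottom) in pixels.
    """
    left, top, right, bottom = bbox
    return max((right - left) / frame_w, (bottom - top) / frame_h)


def amazon_main_ok(
    frame_w: int,
    frame_h: int,
    bbox: tuple[int, int, int, int],
    background_rgb: tuple[int, int, int],
    min_fill: float = 0.85,
) -> bool:
    """True if the background is pure white and the product fills enough frame."""
    return background_rgb == WHITE and fill_ratio(frame_w, frame_h, bbox) >= min_fill


# A 2000x2000 ghost mannequin shot where the garment spans 1800px vertically.
print(amazon_main_ok(2000, 2000, (300, 100, 1700, 1900), WHITE))  # True
```

An off-white background such as (250, 250, 250) fails this check even when the garment fills the frame, which is the usual reason a lifestyle render gets moved to a gallery slot instead.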

How much do AI model image generators cost per image in 2026?

Free starter tiers exist on most platforms. Snappyit offers free credits to begin and prices scale with output volume. FASHN.ai's developer API runs around $0.075 per image. Photoroom and Modelia bundle model generation into broader monthly plans starting near $10 to $30. Claid is enterprise-leaning with custom pricing. WearView and SellerPic ship free entry tiers plus paid plans starting under $30 per month. A small brand or seller producing 50 garments a month typically lands at $20 to $50 in total tool cost; established brands and agencies with hundreds of SKUs scale to a higher monthly tier across these same tools.

Generate your first AI Fashion Model image in 90 seconds

Upload a single flat-lay or hanger photo and Snappyit AI Fashion Model returns a lifestyle on-model render with templated persona, pose, and scene. The same source can also export a ghost mannequin variant for marketplace main images and a jewelry try-on render. Free starter credits, no card needed.

Try Snappyit AI Fashion Model free →